📊 Read or skip? Read. If you are still measuring success in uptime, velocity, or tickets closed, you are likely missing where value is actually created.

Why this, why now

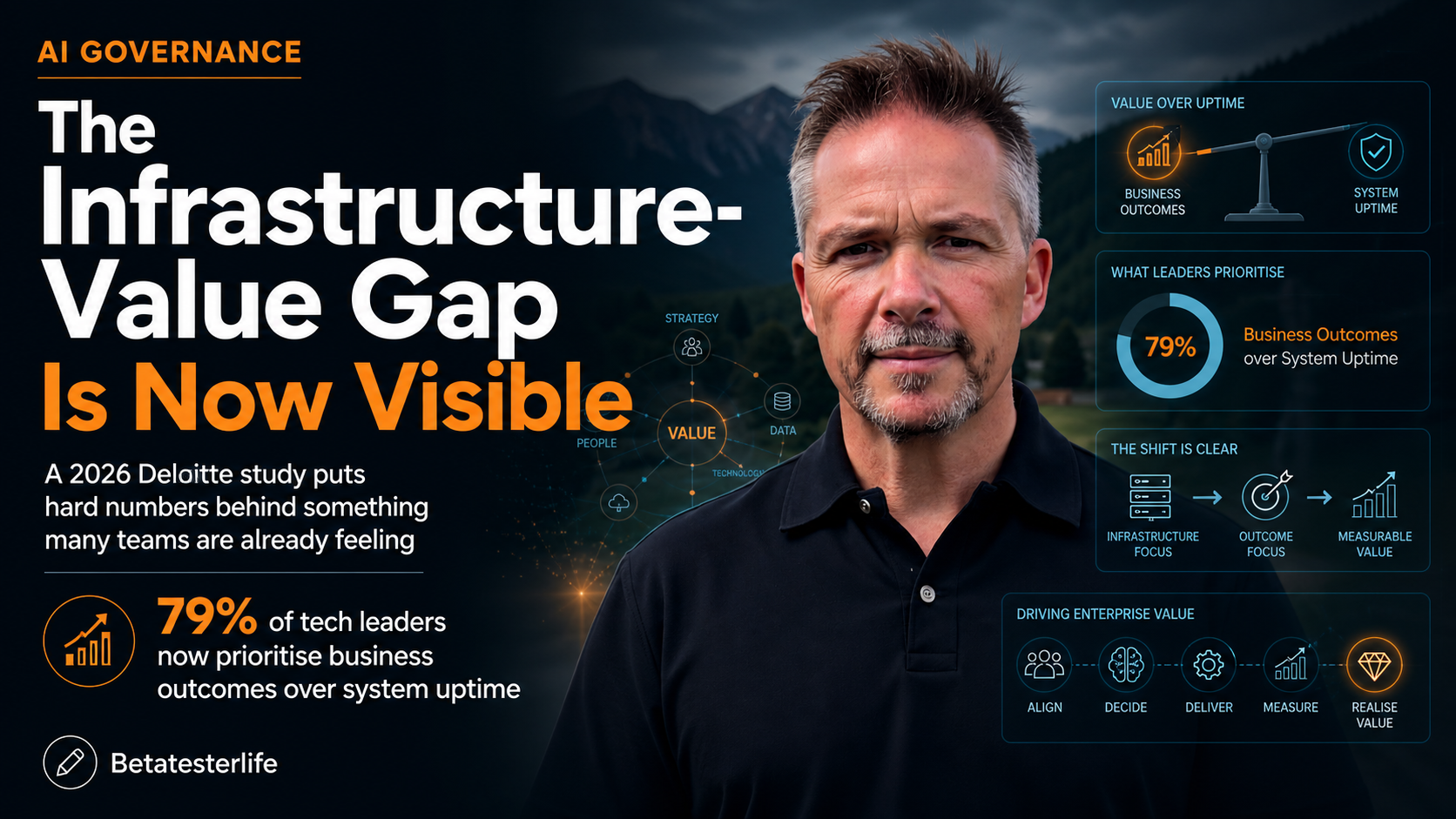

A Deloitte 2026 study puts hard numbers behind something many teams are already feeling:

- 79% of tech leaders now prioritise business outcomes over system uptime

- 42% report zero ROI from AI investments

- The primary cause: “structural lag”

This is not a tooling issue.

It is not a talent shortage problem.

It is a systems design failure at the enterprise level.

We are watching the shift from operators (keep systems running) to orchestrators (ensure systems deliver value).

The big idea: uptime vs. outcome

For years, reliability engineering has been framed as:

“Keep the system available.”

That framing is now insufficient.

A system can be 100% available and still deliver zero business value.

The reframing

- Old model: Reliability = uptime, latency, error rates

- New model: Reliability = business process continuity under change

What changed?

AI, automation, and platformisation have introduced:

- More dependencies across systems

- Faster decision cycles

- Higher coupling between tech and business logic

This means failure is no longer:

“The server went down”

It is:

“The business outcome did not materialise”

The Infrastructure-Value Gap

This is the gap the Deloitte study exposes.

You can visualise it as two parallel systems:

| Layer | What it optimises | Typical metrics |
|---|---|---|
| Infrastructure layer | Stability & performance | Uptime, MTTR, throughput |
| Value layer | Business outcomes | Revenue impact, cycle time, decision quality |

The problem

Most organisations have:

- Mature infrastructure metrics

- Immature value metrics

- Weak linkage between the two

So they can answer:

“Did the system work?”

But not:

“Did the system matter?”

Structural lag (the real constraint)

“Structural lag” is not about people being slow.

It is about org design not keeping pace with capability.

Where it shows up

- Delivery pipelines optimised for output, not outcome

  - Features shipped ≠ value realised

- Funding models locked to projects, not products

  - AI gets built, but not embedded

- Ownership fragmentation

  - No single owner for end-to-end value flow

- Metrics misalignment

  - Teams rewarded for uptime, not impact

Result

AI initiatives land in an environment that cannot absorb them.

So you get:

- Working models

- Clean dashboards

- Zero ROI

Operators vs. Orchestrators

This is the capability shift that matters.

Operator mindset

- Focus: system health

- Success: stability, predictability

- Scope: bounded (service, platform, component)

Orchestrator mindset

- Focus: value flow across systems

- Success: business outcomes under uncertainty

- Scope: end-to-end (customer → decision → action → result)

The difference in practice

| Scenario | Operator response | Orchestrator response |
|---|---|---|
| AI model deployed but unused | “System is live” | “Why is adoption failing?” |
| Data pipeline stable but slow decisions | “No incidents” | “Where is latency in decision-making?” |
| Feature shipped with low impact | “Delivered on time” | “Was the hypothesis wrong?” |

Practical application: closing the gap

You do not fix this with a new tool.

You fix it by redesigning how value flows.

1. Define reliability at the value level

Move from:

- Service Level Indicators (SLIs)

To:

- Value Level Indicators (VLIs)

Examples:

- Time from data → decision → action

- % of AI outputs actually used in decisions

- Business process completion rate under load
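To make the three example VLIs concrete, here is a minimal Python sketch of how they might be computed from per-run records of a business process. The `ProcessRun` schema and its field names are assumptions made up for illustration, not something from the Deloitte study or any particular tool; your own event data will look different.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class ProcessRun:
    """One run of a business process (hypothetical schema for illustration)."""
    data_ready_at: datetime        # when the input data became available
    acted_at: Optional[datetime]   # when action was taken; None if it never was
    ai_output_used: bool           # did the AI output inform the decision?
    completed: bool                # did the process reach its business end state?


def median_hours_to_action(runs: list[ProcessRun]) -> Optional[float]:
    """Median hours from data to action, over runs that reached an action."""
    hours = [
        (r.acted_at - r.data_ready_at).total_seconds() / 3600
        for r in runs
        if r.acted_at is not None
    ]
    return median(hours) if hours else None


def ai_usage_rate(runs: list[ProcessRun]) -> float:
    """Share of runs where the AI output was actually used in the decision."""
    return sum(r.ai_output_used for r in runs) / len(runs) if runs else 0.0


def completion_rate(runs: list[ProcessRun]) -> float:
    """Share of runs that reached the business end state."""
    return sum(r.completed for r in runs) / len(runs) if runs else 0.0
```

None of these functions looks at uptime. A service could report no incidents while `ai_usage_rate` sits near zero, which is the gap this post is about. To approximate "under load", one option is to compute `completion_rate` only over runs from peak periods.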

2. Align ownership to value streams

Stop optimising components.

Start assigning:

- End-to-end accountability for outcomes

- Named owners for value streams (not just systems)

3. Rewire feedback loops

Shorten the distance between:

- Deployment → usage → outcome → learning

If you cannot see whether something worked:

You are not doing AI. You are doing theatre.
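One rough way to see where the loop breaks is to treat deployment → usage → outcome → learning as a funnel and count how many releases reach each stage. The sketch below assumes you can record the furthest stage each release reached; the stage names and the input shape are illustrative assumptions, not a standard.

```python
from collections import Counter

# Ordered stages of the feedback loop; the names are illustrative assumptions.
STAGES = ["deployed", "used", "outcome_observed", "learning_recorded"]


def funnel(furthest_stage: dict[str, str]) -> list[tuple[str, int]]:
    """Count how many releases reached each stage.

    A release that reached a later stage also counts for all earlier ones.
    """
    reached = Counter()
    for stage in furthest_stage.values():
        idx = STAGES.index(stage)  # raises ValueError on an unknown stage
        for s in STAGES[: idx + 1]:
            reached[s] += 1
    return [(s, reached[s]) for s in STAGES]


def biggest_drop(counts: list[tuple[str, int]]) -> tuple[str, str, int]:
    """Return (from_stage, to_stage, releases_lost) for the largest drop-off."""
    drops = [
        (counts[i][0], counts[i + 1][0], counts[i][1] - counts[i + 1][1])
        for i in range(len(counts) - 1)
    ]
    # On ties, max() keeps the earliest transition in the loop.
    return max(drops, key=lambda d: d[2])
```

If most releases stall between `deployed` and `used`, the problem is adoption rather than delivery. A drop between `outcome_observed` and `learning_recorded` means results are visible but nothing is being fed back.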

4. Fund for persistence, not delivery

AI and transformation require:

- Continuous tuning

- Behaviour change

- Integration into workflows

Shift from:

- Project funding → product/value funding

5. Instrument the “last mile”

Most organisations measure:

- System performance

Few measure:

- Decision quality

- User trust

- Action taken

That “last mile” is where ROI is either realised or lost.

Where I disagree (slightly)

The narrative risks implying that uptime is now secondary.

It is not.

It is table stakes.

If your systems are unstable:

- You cannot orchestrate anything

But if you stop at stability:

- You will be replaced by those who can translate stability into value

The pattern to watch

The organisations that will outperform over the next 3–5 years will:

- Treat infrastructure as a means, not an end

- Design for value flow, not system health alone

- Build leaders who can orchestrate across technical and business boundaries

The takeaway

The shift is already happening:

From keeping systems running

To making systems matter

The risk is not that your systems fail.

The risk is that:

They work perfectly — and still do not move the business forward.

If you are leading this shift

Ask three questions:

- Can we trace a direct line from system performance to business outcome?

- Who owns that outcome end-to-end?

- How fast do we learn if it is not working?

If those answers are unclear:

You are not facing a technology problem.

You are facing an orchestration problem.

Next post

How to define Value Level Indicators (VLIs) in practice — with examples from AI, data platforms, and operational systems.

Series note

This is part of Beta Tester Life — exploring where AI, DevOps, and human systems intersect. The focus is simple: move from intent → system → outcome without losing the human layer in between.
